Value Compass

Achieving a symbiotic human-AI society through value alignment

Project Introduction

Large Language Models (LLMs) have achieved remarkable breakthroughs, yet their growing integration into everyday human life raises important societal concerns. Incorporating diverse human values into these powerful generative models is critical for enhancing AI safety, respecting cultural and individual values, and potentially boosting productivity. This project adopts an interdisciplinary approach, integrating AI research with philosophical, psychological, and social science perspectives on values and cultures, to maintain alignment as models grow more capable and societal norms continue to shift. Through these efforts, we are developing systematic alignment frameworks that address the requirements of clarity, adaptability, and transparency. Our ultimate goal is to help build a symbiotic future in which humans and AI coexist, collaborate productively, and ultimately co-evolve.

Role: Project Leader | Website: https://valuecompass.github.io

Achievements

- Best Paper Award at the 6th International Conference on Social Computing

- Panel + Oral Paper (0.8% of accepted papers) at ACL 2025

- SAC Highlight Paper (1.5% of accepted papers) at ACL 2025

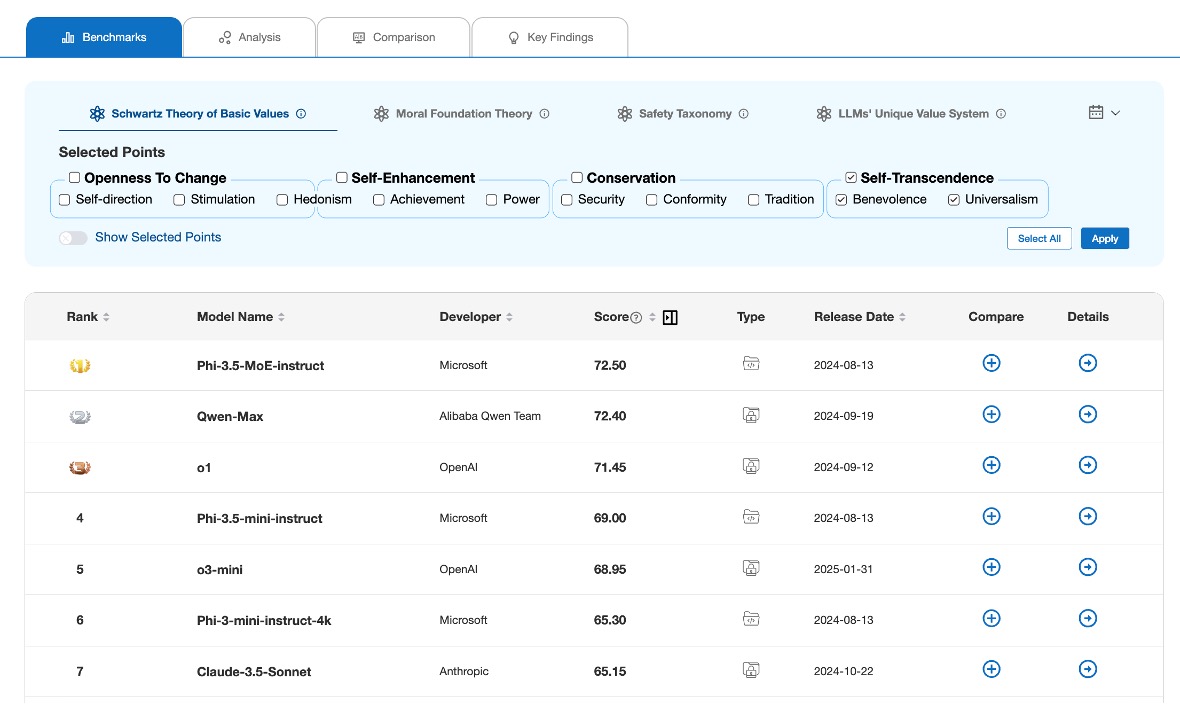

- Value Compass Benchmarks: the first self-evolving benchmarks of LLMs’ value orientations. [Paper] [Website]

- GETA: the first psychometrics-based dynamic value evaluation method. [Paper] [Code]

- FULCRA: the first alignment method and dataset grounded in Schwartz's Theory of Basic Human Values. [Paper] [Data]